I initially wanted to create a post that would get someone up and running with Windows Azure, start to finish, but the reality is that there is no “simple” app you can create. While you can create a simple data service, or a simple MVC web site, or even walk through the implementation of a simple worker role, there are many parts to an Azure application, each warranting its own series of posts.

However, I found an out: the Azure samples and training site. I have been around long enough to develop within most of the paradigms required for Azure development, so, with my head as big as it is, I didn’t want to go through a walkthrough that was dozens of pages long. There is something that is instantly gratifying about having a working app in front of you that you can poke and prod and change and learn to understand.

What this post is not

This is not a comprehensive walkthrough or a hand-holding exercise to get you up-to-speed in Azure development. You will not be a professional Azure developer ready for your first cloud app assignment.

I am not sharing the code as text in this post. If you’re going to follow along, you’re going to have to have the sample downloaded (the link is inline in the post). My assumption is that you’re able to navigate your way through a solution and find the code files I’m discussing.

What this post actually is

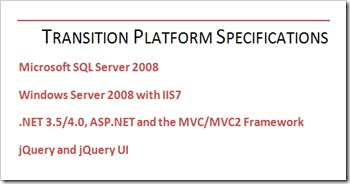

The first part of this post should take you less than 30 minutes and you will have PhluffyFotos running locally. The application makes use of Azure data services, workers, and the ASP.NET MVC 2 framework. It’s billed as fairly 2.0-ish, and has a functional Silverlight 2.0 slideshow viewer to boot.

The second part of the post breaks down some of the UX elements and explores some of the tech that powers said UX.

I have a fairly clean install of Windows 7 with Visual Studio 2010 running on it, so there should be no surprises if you’re running with a similar setup. I took notes as I progressed through the procedure so that you should be able to replicate my steps. If you find that I have missed anything, please let me know!

Enable Azure tools through the Visual Studio 2010 Project Templates

Create a new project and select the category of “cloud”. Walk through the download, exit VS and install the tools. You can obviously omit this step if you’ve already set up Azure tools on your machine.

Download the sample app

This is located here on CodePlex. Unzip it to its default location.

Enable PowerShell Scripts for Scripts Signed by a Trusted Source

To do this, run PowerShell in administrator mode and use the following command:

Set-ExecutionPolicy RemoteSigned

Build PhluffyFotos

Open Visual Studio 2010 in administrator mode, then navigate to and open up the solution in the code directory. It’s located in the root of the folder you unzipped in the second step.

Do a CTRL+SHIFT+B on the solution to build out the bits.

Close down VS2010.

Provision Local Queues and Storage

This is required so that you do not have to sign up for an Azure account. At this time, only residents in the USA have the opportunity to create one (even for test purposes) without a credit card.

Open up a command prompt in administrator mode and navigate to your setup/scripts directory in the project folder. Type the following command and hit ENTER:

provision.cmd

You don’t technically have to run this from a command line, but if you just execute the script without a command line you’ll miss any feedback/error messages should any surface.

Run the App!

Finally, open Visual Studio 2010 with administrative privileges. Open the PhluffyFotos solution and press F5.

Create yourself an account and start uploading some images. You can try to navigate around the site, add additional albums and use the slide show.

This concludes the first part of this post, and you should be running the app without much fuss.

First Thoughts – Interface and Basics

There’s a lot to set up here, but keep in mind that we’re actually jumping into a project that is essentially complete. You’re required to do all of the things a project would require as you develop it, but you have to do it all at once to get it set up.

The sample application isn’t robust enough to be production quality, but as a sample, that is to be expected.

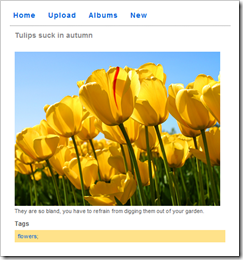

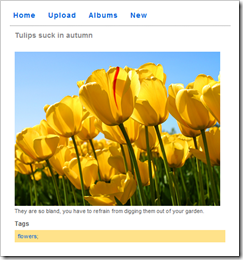

After uploading a new foto…erm…photo, the image does not seem to appear:

However, after navigating back to the same page through the album/photo selection process (or waiting several seconds and pressing refresh), the photo appears:

After deeper review, this seems to be the result of the worker process not finishing the thumbnail generation before the site returns the view that requires the image. I don’t know at this point whether this has to do with the local performance of the fabric or with other variables (such as the 2-second queue sleep in the config).
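To get a feel for how much a polling interval alone could contribute to that delay, here is a rough Python sketch. It is not code from the sample: it assumes the worker wakes on a fixed schedule and only notices a new message on its next wake-up, and the 0.3-second processing time is a made-up number. The function name `thumbnail_ready_at` is mine.

```python
def thumbnail_ready_at(upload_time, poll_interval, processing_time, first_poll=0.0):
    """Return the simulated time at which the thumbnail exists.

    Simplification: the worker wakes at first_poll, first_poll + poll_interval,
    and so on, and only sees a message on the first wake-up at or after
    upload_time.
    """
    if upload_time <= first_poll:
        next_poll = first_poll
    else:
        elapsed = upload_time - first_poll
        intervals = -(-elapsed // poll_interval)  # ceiling division
        next_poll = first_poll + intervals * poll_interval
    return next_poll + processing_time


# Upload lands 0.5 s after a poll; the worker sleeps 2 s between polls and
# (hypothetically) needs 0.3 s to resize the image.
upload_time = 0.5
ready = thumbnail_ready_at(upload_time, poll_interval=2.0, processing_time=0.3)
print(f"thumbnail ready {ready - upload_time:.1f}s after upload")
```

Under these assumptions the thumbnail shows up about 1.8 seconds after the upload, long after the view has been returned, which would be consistent with the photo appearing on refresh.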

Another glitch (in my mind) would be that albums without photos are not represented in the interface until you have a picture. While this makes sense for people who don’t own the albums (you, visiting my profile), for the person who just created the album, it’s a little confusing.

For example, if I create an album called “My Cherished Family Photos”, then navigate to my list of albums, the newly created album is not there. If you then try to create a new one with the same name (assuming that the “add” didn’t take), the application just throws an exception.
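As a sketch of the behaviour I would have expected, here is a small in-memory example in Python. It is not how the sample's repository works; `AlbumStore`, `DuplicateAlbumError` and the owner name are all hypothetical. The owner sees empty albums in their own list, and a duplicate name produces an error the UI could show rather than an unhandled exception.

```python
class DuplicateAlbumError(Exception):
    """Raised when an owner already has an album with the same title."""


class AlbumStore:
    """In-memory stand-in for album storage (hypothetical, not the sample's repository)."""

    def __init__(self):
        self._albums = {}  # (owner, normalized title) -> display title

    def create(self, owner, title):
        key = (owner, title.strip().lower())
        if key in self._albums:
            raise DuplicateAlbumError(f"{owner} already has an album named {title!r}")
        self._albums[key] = title.strip()

    def albums_for_owner(self, owner):
        # Empty albums are included so the owner sees what they just created.
        return sorted(t for (o, _), t in self._albums.items() if o == owner)


store = AlbumStore()
store.create("alice", "My Cherished Family Photos")
print(store.albums_for_owner("alice"))

try:
    store.create("alice", "My Cherished Family Photos")
except DuplicateAlbumError as error:
    print(f"Could not create album: {error}")
```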

Finally, and without an extremely deep dive, once you’ve selected an album to view, the MasterPage link to Home is broken, only ever returning you to the album cover and not your full list of albums. Either the link text should be changed, or the link, or both.

Interesting Technical Bits

I was a little worried when I first opened the app as there were several projects in the solution. As a first-stop for Azure exploration, this might be a little intimidating.

Let’s have a quick look at the breakdown here, but a little out of order:

- PhluffyFotos.WebUX

  - Used to render the interface, this is an ASP.NET MVC 2.0 project. There are some very simple view models, the appropriate controllers and expected views and references to all the other projects, save Worker.

  - There is a third-party component here as well, Vertigo.SlideShow, which is a Silverlight control used to display a slide show of the photos. It accepts an XML string of photo URLs and renders the images from the selected album on the site.

- AspProviders

  - These are the ASP.NET role, membership, profile and session state providers that will be used with the application. This goes back to the beauty of ASP.NET 2.0 – we are still able to use the good bits in ASP.NET like authentication and authorization, but we can sub out our providers to use the back-ends that we like. In this case, it’s built on the Azure StorageClient and linked to the MVC site in the project with entries in Web.Config.

- PhluffyFotos.Data & PhluffyFotos.Data.WindowsAzure

  - Data is where the models for the site – Album, Photo and Tag – are defined. We also have the definition of the IPhotoRepository interface which sets up the contract for creating and manipulating the above models, as well as searching for photos based on tags. A few other helper methods support some lower-level procedures such as initializing the user’s storage and building the list of photos for the slideshow.

  - Data.WindowsAzure takes the models and Azurifies them, primarily by adding simple wrapper classes that inherit from TableServiceEntity and store the model objects as Azure-compatible rows. This project also contains the PhotoAlbumDataContext as well as conversion classes for moving the Azure version of the entities back to project models.

- PhluffyFotos.Worker

  - The WorkerRole class in this project is the implementation of the abstract base RoleEntryPoint class for the Azure worker. There are OnStart, OnStop and Run methods common to all services. When run, it will execute one of three predefined commands: CreateThumbnail, CleanupPhoto or CleanupAlbum. This worker is executed until processing in all queues is completed.

- PhluffyFotos

  - This is the project that defines the roles that are used in this Azure application: WebUX and Worker.

  - The configuration defines the HTTP endpoints, the maximum number of instances of a role, and any custom settings that may be required for the roles.

  - This is also the default start-up project for the solution. The magic of Visual Studio uses this project to ensure that the local development fabric is running then deploys the service and launches our web site (the default HTTP endpoint on port 80). Pretty slick.
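The split between Data and Data.WindowsAzure described above (plain models on one side, storage-shaped rows plus converters on the other) can be pictured with a short Python sketch. This is an illustration only, not a port of the sample's C#: the field names and the key scheme (owner and album in the partition key, photo id as the row key) are my assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Photo:
    """Plain model, in the spirit of the Data project."""
    owner: str
    album: str
    photo_id: str
    title: str


@dataclass(frozen=True)
class PhotoRow:
    """Storage-shaped wrapper, in the spirit of Data.WindowsAzure."""
    partition_key: str
    row_key: str
    title: str


def to_row(photo):
    # Assumed key scheme; it breaks if an owner name contains "|".
    return PhotoRow(
        partition_key=f"{photo.owner}|{photo.album}",
        row_key=photo.photo_id,
        title=photo.title,
    )


def from_row(row):
    owner, album = row.partition_key.split("|", 1)
    return Photo(owner=owner, album=album, photo_id=row.row_key, title=row.title)


photo = Photo(owner="alice", album="family", photo_id="p1", title="Beach day")
row = to_row(photo)
print(row.partition_key, row.row_key)
print(from_row(row) == photo)
```

The point of the arrangement is that the web site only ever sees `Photo`, so the storage details stay behind the repository.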

The Azure Bits

After you get a good lay of the land, there’s not really too much going on to make it tick. As I implied, I thought it could be a little intimidating to get an Azure solution running if you needed five projects to make it tick. With a better understanding, that’s not really the case.

For all intents and purposes, the web UX site behaves as you would expect any MVC 2.0 site to behave. You have an album controller with an Upload view. When submitted, the photo is pulled from the forms collection and passed to the repository.

The Azure part comes in at this point: the photo’s on the way to the storage cloud after adding a new object to the data context. There are two parts to this, the table entry and the blob storage. This is great, as we can now explore this and know how to send binary objects to Azure storage.

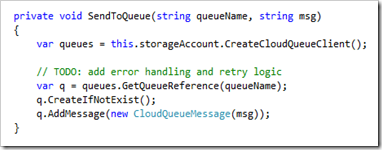

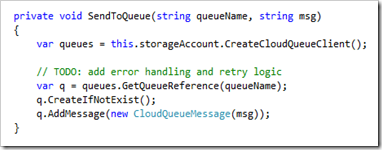
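To make the two-part save concrete, here is a hedged Python sketch using dictionaries as stand-ins for table and blob storage. Nothing here is the sample's code: `InMemoryStorage`, `save_photo`, the blob naming and the write order (binary first, then the row that points at it) are all my own choices for illustration.

```python
import uuid


class InMemoryStorage:
    """Stand-in for a storage account: one 'table' and one blob container."""

    def __init__(self):
        self.table = {}  # photo_id -> metadata row
        self.blobs = {}  # blob name -> raw bytes


def save_photo(storage, owner, album, title, image_bytes):
    photo_id = uuid.uuid4().hex
    blob_name = f"{owner}/{album}/{photo_id}"
    # Part one: the binary content goes to blob storage.
    storage.blobs[blob_name] = bytes(image_bytes)
    # Part two: a small metadata row records where the binary lives.
    storage.table[photo_id] = {
        "owner": owner,
        "album": album,
        "title": title,
        "blob": blob_name,
    }
    return photo_id


storage = InMemoryStorage()
photo_id = save_photo(storage, "alice", "family", "Beach day", b"\x89PNG...")
row = storage.table[photo_id]
print(row["blob"], len(storage.blobs[row["blob"]]))
```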

Next, the image properties are sent to the queue for further processing by this private method in the repository:

That worker role we saw earlier will now have some work to do with an image waiting to be processed. This queue behavior is similar to other bits in the project when albums or photos are deleted.
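The queue-then-worker flow can be sketched in Python with in-memory queues. The three command names come from the sample, but everything else is assumed for illustration: one queue per command, JSON message bodies, and handlers that only track which thumbnails exist.

```python
import json
from collections import deque


def create_thumbnail(thumbnails, args):
    # Stand-in for resizing: just record that a thumbnail now exists.
    thumbnails[args["photo_id"]] = args["album"]


def cleanup_photo(thumbnails, args):
    thumbnails.pop(args["photo_id"], None)


def cleanup_album(thumbnails, args):
    doomed = [p for p, album in thumbnails.items() if album == args["album"]]
    for photo_id in doomed:
        del thumbnails[photo_id]


HANDLERS = {
    "CreateThumbnail": create_thumbnail,
    "CleanupPhoto": cleanup_photo,
    "CleanupAlbum": cleanup_album,
}


def enqueue(queues, command, **args):
    # Messages travel as text, so the arguments are serialized to JSON.
    queues[command].append(json.dumps(args))


def drain(queues, thumbnails):
    """Process messages until every queue is empty; return how many ran."""
    processed = 0
    while any(queues.values()):
        for command, queue in queues.items():
            if queue:
                args = json.loads(queue[0])
                HANDLERS[command](thumbnails, args)
                queue.popleft()  # remove only after the handler succeeded
                processed += 1
    return processed


queues = {command: deque() for command in HANDLERS}
thumbnails = {}

enqueue(queues, "CreateThumbnail", photo_id="p1", album="family")
enqueue(queues, "CreateThumbnail", photo_id="p2", album="travel")
print(drain(queues, thumbnails), sorted(thumbnails))

enqueue(queues, "CleanupAlbum", album="family")
print(drain(queues, thumbnails), sorted(thumbnails))
```

Removing a message only after its handler has run means a failed handler leaves the work on the queue for another attempt, which is one reason to push slow or failure-prone operations through a queue in the first place.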

Next Steps

The one thing I would like to spend more time exploring is the provisioning of tables and queues in the cloud. The PowerShell script for queues is quite straightforward (a single call for each queue) and the table provisioning is only slightly more complex (a call is made to a method in the PhotoAlbumDataContext) but all this is done without explanation in this project.

Data and queue provisioning is part of the setup you perform to get running, but it’s also critical to the project working. PowerShell is fine locally, but how do you reproduce this creation in the cloud?
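One possibility, and it is only an assumption here rather than something the sample demonstrates, is to make provisioning idempotent and run it when a role starts: create whatever is missing and leave the rest alone. A Python sketch of that idea, with a fake storage account and made-up queue and table names:

```python
class FakeStorageAccount:
    """Stand-in for a storage account; tracks names only."""

    def __init__(self):
        self.queues = set()
        self.tables = set()


def ensure_provisioned(account, queue_names, table_names):
    """Create whatever is missing and report what was created."""
    created = []
    for name in queue_names:
        if name not in account.queues:
            account.queues.add(name)
            created.append(("queue", name))
    for name in table_names:
        if name not in account.tables:
            account.tables.add(name)
            created.append(("table", name))
    return created


# Hypothetical names, not taken from the sample's scripts.
QUEUES = ["thumbnail-requests", "photo-cleanup", "album-cleanup"]
TABLES = ["Albums", "Photos", "Tags"]

account = FakeStorageAccount()
first_run = ensure_provisioned(account, QUEUES, TABLES)
second_run = ensure_provisioned(account, QUEUES, TABLES)
print(len(first_run), len(second_run))
```

Because a second run creates nothing, the same routine would be safe to call on every start-up, locally or in the cloud.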

I also want to understand better some of the architectural decisions on this particular project…like, why is deleting a photo an operation that needs to be queued? Are blob storage operations inherently slow enough to justify this? Was it simply for demonstration purposes?

Wrapping Up

Creating this post was a great way to contextualize the basic components of an Azure application. Sure, I’ve created sites, services and models and set up endpoint bindings before, but not in the context of Azure.

I could have joined any team and crafted an MVC site that sits on top of a repository such as the one in the project, and I would never have to know skip-lick-a-beat about Azure – it is that seamlessly integrated into the solution – but I much prefer to get my hands dirty and learn about what’s going on behind the scenes.

I hope this post helps you navigate through the PhluffyFotos sample application and gives you a grounding in Azure-based cloud development.
