So, we’re now into October and the year is passing quickly. The major function of my employment – helping the organization flip to a new operations platform – is nearing completion. As well, I have just wrapped up an 11-week series of articles with a publisher that I am very excited to share (but have to wait a little still!). The articles explain why I have been scarce here on my blog, but I am glad to have some time to invest in this again…especially with the release of the MVC 3 framework!

What I Actually Do

The reality is that when you flip a company’s software for inventory, billing, service and customer management, the data conversion is the easy part.

Often, it’s the process changes that can cripple the adoption of a new platform, especially when you’re moving from custom-developed software to an off-the-shelf product. Change can be very hard for some users.

I have the good (ha!) fortune here of working through both data and process transformations.

The Transition Platform

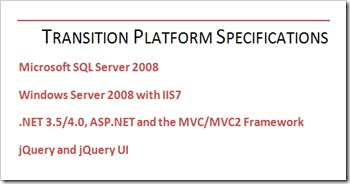

Being the only developer on the project – and in the organization – I do have the pleasure of being able to pick whatever tools I want to work with, and I have the backing of a company that pays for those tools for me.

Great progress has been made – albeit at times slower than other vendors in certain areas. But with Visual Studio 2010 (which I switched to halfway through the project) and the MVC Framework I was literally laughing at how trivial some of the tasks were rendered.

The vertical nature of a development environment and a deployment environment that are designed to work together makes things that much more straightforward.

It is important to note that my development over the last year was not an end in itself. What I produced was simply a staging platform that would facilitate a nearly-live transition to the target billing and customer management system. My job, done right, would leave no end-user software in use.

On to The Problem with the Data

Not all data is a nightmare. A well-normalized database with referential integrity, proper field-level validation and the like will go a long way to helping you establish a plan of action when trying to make the conversion happen. Distinct stored procedures coupled with single-purpose, highly-reusable code make for easily comprehended intention.

Sadly, I was not working with any of these. The reality is that I was faced with the following ~~problems~~ opportunities that I had to develop for:

- There are over 650,000 records in 400 tables. This is not a problem in and of itself, and it’s not even a large amount of data compared to projects I’ve worked on with tens of millions of rows. It likely wouldn’t be a problem for anyone, unless they had to go through it line by line…

- I had to go through it line by line. Sort of. There were several key problems with the data that required careful analysis to get through, such as dual-purpose fields, fields that were re-purposed after 4 years of use, null values where keys are expected, and orphaned records.

- The data conversion couldn’t happen – or begin to happen – until some of the critical issues were resolved. This meant developing solutions that could identify potentially bad data and providing a way for a user to resolve it. It also meant waiting for human resources that had the time to do so.

- The legacy software drove the business processes, then the software was shaped around the business processes that were derived from the software. This feedback loop led to non-standard practices and processes that don’t match up with software in the industry (but have otherwise served the company well).

- Key constraints weren’t enforced, and there were no indexes. Key names were not consistent. There were no relationships defined. Some “relationships” were inferred by breaking apart data and building “keys” on the fly by combining text from different parts of different records (inventory was tied to a customer only by combining data from the customer, the installation work order and properties of the installer, for example).

- The application was developed in classic ASP and the logic for dealing with the data was stored across hundreds of individual files. Understanding a seemingly simple procedure invariably meant reading hundreds of lines of script, sometimes spread across as many as a dozen different files.
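To make the "identify potentially bad data" idea concrete, here is a minimal sketch of how null keys and orphaned records could be flagged for a person to review. It is not the code I used on the project: the table and column names (`customers`, `work_orders`, `cust_key`) and the helper `find_suspect_rows` are made up for illustration, and it uses Python with an in-memory SQLite database purely so it runs anywhere.

```python
import sqlite3


def find_suspect_rows(conn, child, fk, parent, pk):
    """Return (null_key_ids, orphan_ids) for one inferred relationship.

    Hypothetical helper. Table and column names are interpolated straight
    into the SQL, so they must come from trusted, hard-coded configuration.
    """
    # Rows where the "key" is missing or blank.
    null_keys = [row[0] for row in conn.execute(
        f"SELECT rowid FROM {child} "
        f"WHERE {fk} IS NULL OR TRIM({fk}) = '' ORDER BY rowid")]
    # Rows whose key is present but matches nothing in the parent table.
    orphans = [row[0] for row in conn.execute(
        f"SELECT c.rowid FROM {child} c "
        f"LEFT JOIN {parent} p ON c.{fk} = p.{pk} "
        f"WHERE c.{fk} IS NOT NULL AND TRIM(c.{fk}) <> '' "
        f"AND p.{pk} IS NULL ORDER BY c.rowid")]
    return null_keys, orphans


# Tiny made-up data set: no constraints, keys stored as plain text.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (cust_key TEXT);
    CREATE TABLE work_orders (id INTEGER PRIMARY KEY, cust_key TEXT);
    INSERT INTO customers VALUES ('C001'), ('C002');
    INSERT INTO work_orders (id, cust_key) VALUES
        (1, 'C001'), (2, NULL), (3, 'C999'), (4, ''), (5, 'C002');
""")

null_keys, orphans = find_suspect_rows(
    conn, "work_orders", "cust_key", "customers", "cust_key")
print("missing keys:", null_keys)
print("orphaned rows:", orphans)
```

The point of returning row identifiers rather than fixing anything automatically is that a human who knows the business still has to decide what each bad record should become.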

Mashing Up Data

The items listed above were all significant challenges in and of themselves, but the reality is that these are just a sample of the ~~problems~~ opportunities, from just one system. I had three to work with, and all were joined by a single,

Worse, the key was stored as editable text in all three systems. Because of a lack of role- and row-level security, someone working their second day at the company could change the key or switch keys between users. It was a little scary.

And I can’t imagine a manager in the world who likes to hear, “Hey, I’m going to just take three unrelated sets of data, mash them up and let you run your business on it, mmmkay?” Obviously a better approach was needed.

Now, Here’s How I Got Through

It took over a year, but I am now close enough to the finish line that I could throw a chicken over it. Over the next few posts, in post-mortem fashion, I’ll talk about each of the challenges I had to work through and how I tackled them.

Stay tuned for: Ill-Formatted Data
